Run Llama 2 70B on Your GPU with ExLlamaV2

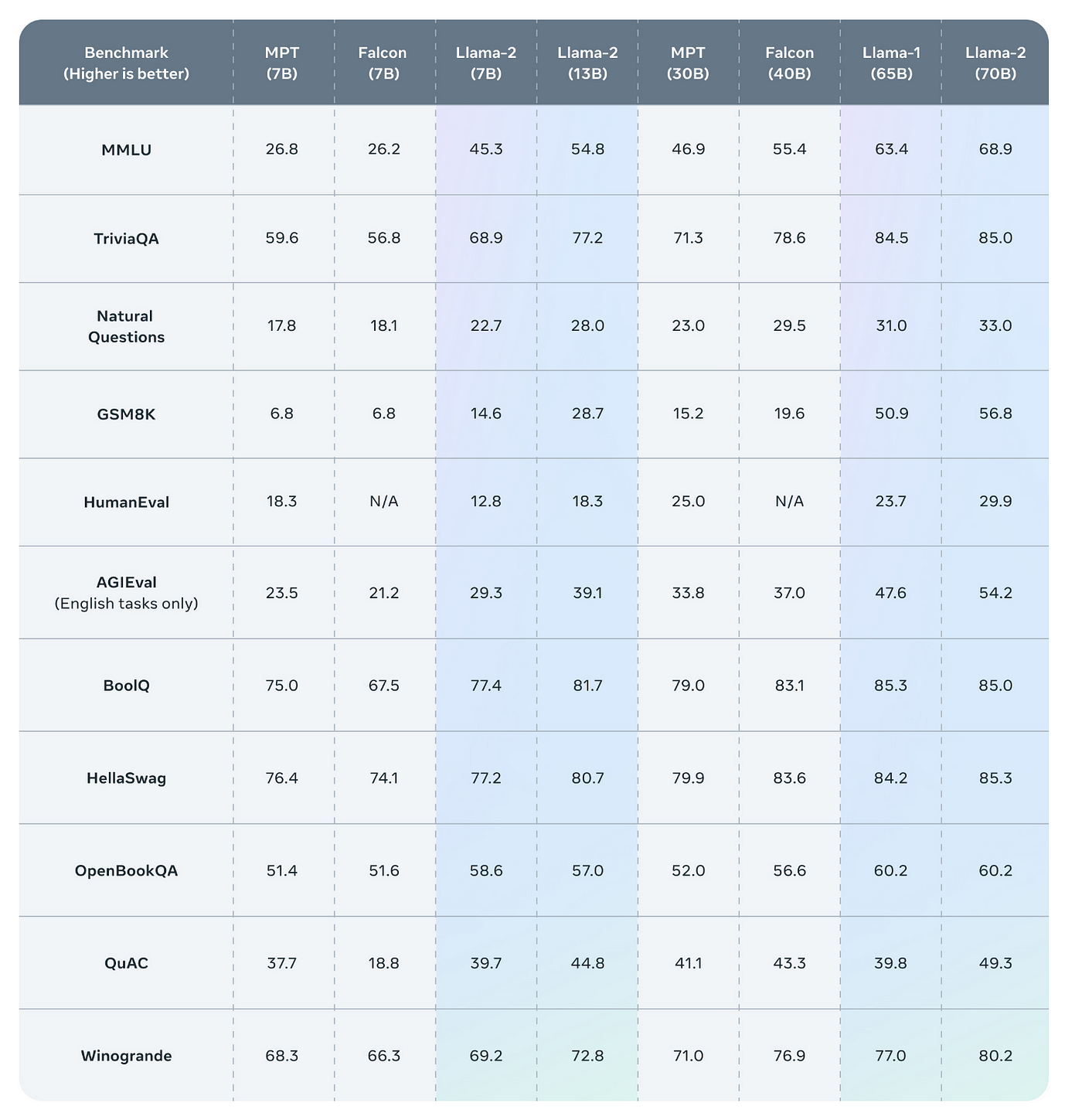

LLaMA-65B and Llama 2 70B perform best when paired with a GPU that has at least 40 GB of VRAM. More than 48 GB of VRAM is needed for a 32k context, since 16k is the maximum that fits on 2x 4090 (2x 24 GB). Below are the Llama 2 hardware requirements for 4-bit quantization. If the 7B Llama-2-13B-German-Assistant-v4-GPTQ model is what you're after... Using llama.cpp with llama-2-13b-chat.ggmlv3.q4_0.bin, llama-2-13b-chat.ggmlv3.q8_0.bin, and llama-2-70b-chat.ggmlv3.q4_0.bin from TheBloke, on a MacBook Pro (6-core Intel Core i7). Background: I would like to run a 70B Llama 2 instance locally (not train, just run). Quantized to 4 bits, this is roughly 35 GB (on HF it's actually as...
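The "roughly 35 GB" figure for a 4-bit 70B model follows from simple arithmetic: parameters times bits per weight, divided by 8 bits per byte. The sketch below is only a back-of-the-envelope estimate. The function name `weight_memory_gb` is made up for illustration, the parameter counts are the nominal 7B/13B/70B sizes, and the result covers the weights alone; it leaves out the KV cache, activations, and any quantization metadata, so real VRAM usage will be higher.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in decimal GB.

    Hypothetical helper for illustration: ignores KV cache, activations,
    and per-group quantization overhead (scales, zero points).
    """
    total_bytes = n_params * bits_per_weight / 8  # 8 bits per byte
    return total_bytes / 1e9


if __name__ == "__main__":
    # Nominal parameter counts (assumed round numbers, not exact).
    models = [("7B", 7e9), ("13B", 13e9), ("70B", 70e9)]
    for name, n_params in models:
        for bits in (16, 8, 4):
            gb = weight_memory_gb(n_params, bits)
            print(f"Llama 2 {name} at {bits}-bit: ~{gb:.1f} GB of weights")
```

By this estimate the 4-bit 70B weights alone exceed a single 24 GB card, which is consistent with the two-GPU (2x 24 GB) setup mentioned above.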

Clearly explained guide for running quantized open-source LLM applications on CPUs using Llama 2, C Transformers, GGML, and LangChain: a step-by-step guide on TowardsDataScience. Related repository: PacktPublishing/LangChain-MasterClass---Build-15-OpenAI-and-LLAMA-2-LLM-Apps-using-Python. Getting started with Llama 2: this manual offers guidance and tools to assist in setting up Llama, covering access to the model, hosting, instructional guides, and integration. LangChain is a powerful open-source framework designed to help you develop applications powered by a language model, particularly a large language model (LLM).

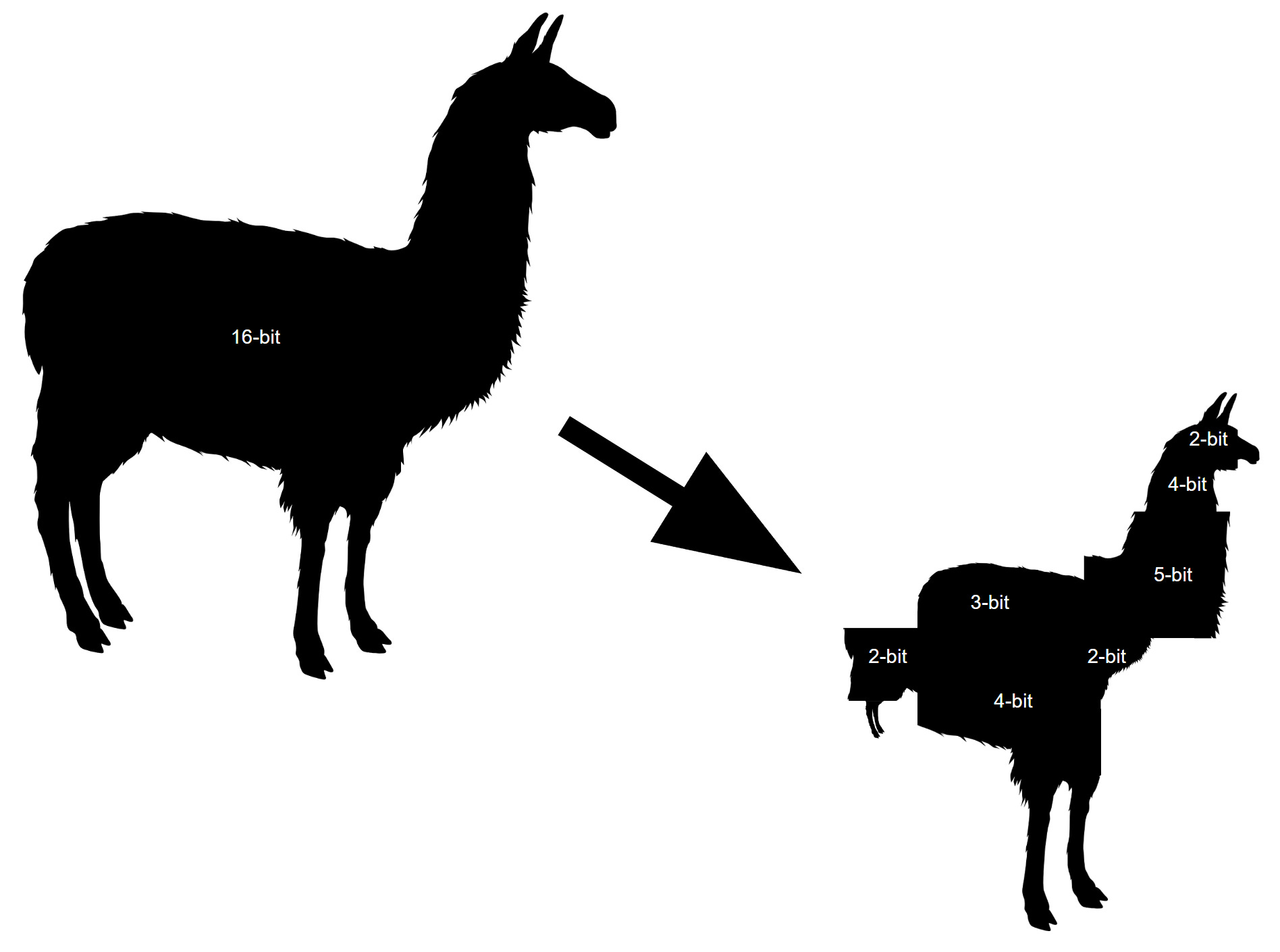

"In this work, we develop and release Llama 2, a collection of pretrained and fine-tuned large..." Llama 2 is a family of pre-trained and fine-tuned large language models (LLMs) released by Meta AI in... "We introduce LLaMA, a collection of foundation language models ranging from 7B to..."

"Llama 2 is here - get it on Hugging Face": a blog post about Llama 2 and how to use it with Transformers and PEFT. "LLaMA 2 - Every Resource you need": a compilation of relevant resources to... Llama 2 is a family of state-of-the-art open-access large language models released by Meta today, and we're excited to fully support the launch with comprehensive integration. This Space demonstrates the model Llama-2-70b-chat-hf by Meta, a Llama 2 model with 70B parameters fine-tuned for chat instructions. Llama 2 is a collection of pretrained and fine-tuned generative text models ranging in scale from 7 billion to 70 billion parameters. This is the repository for the 70B pretrained model.

Run Llama 2 Chat Models on Your Computer, by Benjamin Marie (Medium)
